Real-time Ray Tracing in JavaScript

A ray tracer is a program that renders a 3-D scene by firing virtual rays from the camera and computing their intersections with the objects in the scene (like echolocation). Normally, this is done on a per-pixel basis.

This process is known for being too slow for real-time applications. Yesterday I had an idea for a ray tracer: what if you could render more salient parts of the image with higher resolution? Areas of the image with low contrast can be approximated with solid colors and use fewer rays (which are computationally expensive). Can we feasibly sacrifice image quality for speed, and, if so, can this be done in real-time?

I was eager to find out. Perhaps the quickest way to plot pixels on the screen is to use the HTML canvas element with JavaScript, so I wrote my algorithm as a web demo (using CoffeeScript instead of JavaScript). The algorithm recursively renders rectangular regions of the image as follows:

- Choose several pixels spaced uniformly throughout the region. Sample the color of these pixels by firing rays.

- Determine the mean and variance of the colors, where the metric is Euclidian distance in RGB space. The variance is our estimate for visual saliency.

- If the variance is low, render the entire region as a solid rectangle whose color is the mean of the samples from the previous step.

- If the variance is high, split the rectangle in half (either horizontally or vertically, depending on which dimension is longer) and recurse on both halves. When recursing on each half, the appropriate samples from the parent calculation are reused (for efficiency).

In order to test the algorithm I set up a test scene that resembles an empty tennis court enclosed by a tall wooden fence on a sunny day. I implemented all the basics for a simple ray tracer: spheres, planes, quads, fog, texture mapping, linear algebra helpers, etc. The result:

One interesting property of this ray tracer is that it the quality of the final image is real-time configurable—we can easily control how many rays are fired per region and the threshold that determines when to stop recursing. I wrote the demo to dynamically adjust the quality of the scene in order to maintain a constant 25 frames per second, which is slow for a video game but still faster than standard 24-FPS video

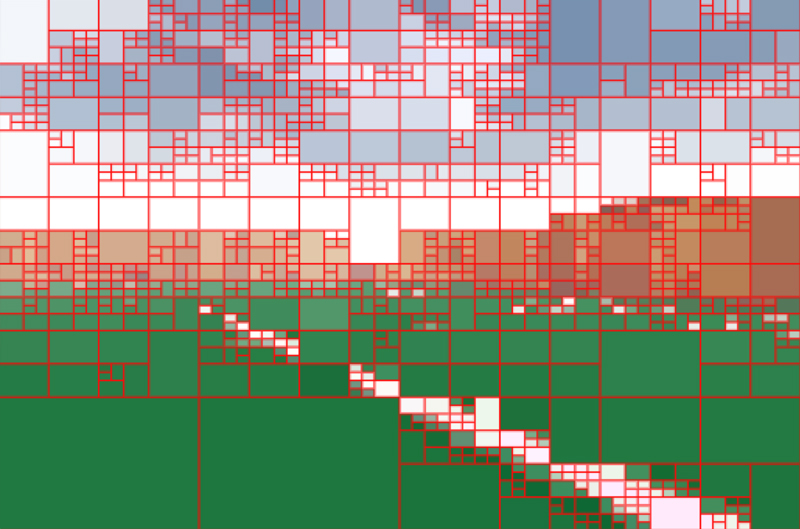

You can see that the algorithm is a form of binary space partitioning in screen space:

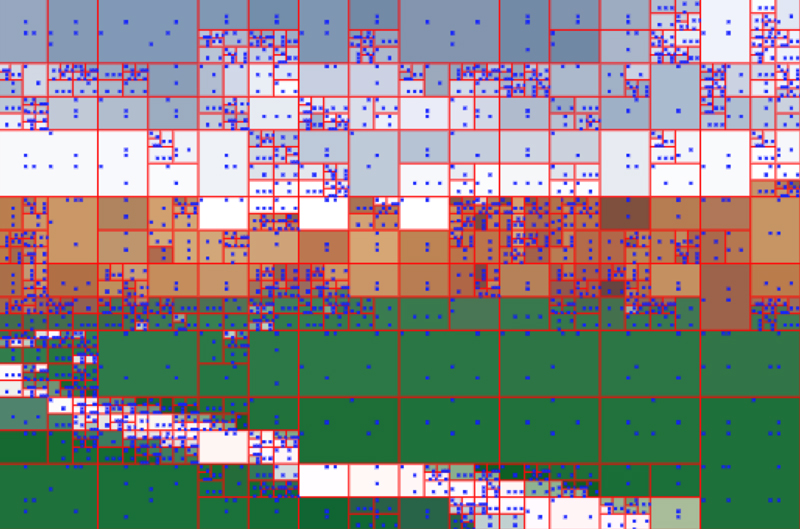

Determining which pixels to sample in each region is an interesting problem. I tried several methods including random sampling, sampling along a circle circumscribed by the region, and dividing the region into rectangles of roughly equal area and sampling the center of each rectangle. Random sampling resulted in the highest quality image averaged over time (i.e., if you could render several frames and take the pointwise mean of each pixel), but caused a lot of visual jittering that was uncomfortable to watch. These are the sampling choices made by the algorithm in one particular frame:

Not the best choices of sample locations, but we don’t have much time to compute these locations (less than 100 microseconds). Notice also how this screenshot is a lower-quality rendering compared to the first one—this is because drawing the lines and dots on top of the image takes extra time, and the program compensated by lowering the render quality in order to maintain a reasonable framerate.

You can try out the demo (use the arrow keys to move), but I must warn you: it is extremely CPU intensive and you will need a fast processor for reasonable quality. With Chrome running on my Intel Core i7, I can see images like the ones above at reasonable framerates.

I’m impressed with how fast JavaScript runtimes have become (especially Chrome’s V8). On my laptop, the demo fires ~7000 rays per second and each ray undergoes an intersection test with every object in the scene. The code is optimized for readability rather than efficiency and performs a lot of memory allocation each frame, yet still manages to run at about 30 frames per second. Running the demo without the "visual saliency" optimization resulted in about about a 40-60x reduction in framerate. I suspect that this technique may actually be a viable method of real-time rendering if it was written in a compiled programming language to target a GPU, so I conclude that my experiment was a success.

This was just a quick experiment, but there are a number of improvements that could be made, including:

- Use web workers to parallelize the rendering.

- Determine the sample variance in a better color space, such as L*a*b*.

- Use WebGL instead of canvas.

- Features: More primitives like triangle meshes, cubes, etc. Lighting, shading, shadows, reflections, refraction. Hierarchical scene graph. Animations. Etc...

- Use CUDA or OpenCL to take advantage of GPU hardware.

Links:

- Source code on GitHub

- Demo courtesy of Heroku

[1] Video can get away with lower framerates better because of the temporal smoothing of each frame.

Posted on March 30, 2013.